Key Takeaways

-

Architectural Mismatch: Microcontrollers are fundamentally ill-suited for the rigorous real-time timing and parallelism required by true high-speed DAC applications.

-

Hidden Costs: The apparent 'cost-saving' of using an existing microcontroller often gets eclipsed by increased development time, complex debugging, and compromised reliability.

-

Resource Strain: Achieving higher speeds on a microcontroller typically demands extensive processor cycles, interrupts, and DMA channels, leaving little headroom for other system functions.

-

Scalability & Robustness Concerns: Solutions reliant on 'tricks' to boost DAC speed are inherently fragile, difficult to scale, and prone to issues in industrial or mission-critical environments.

-

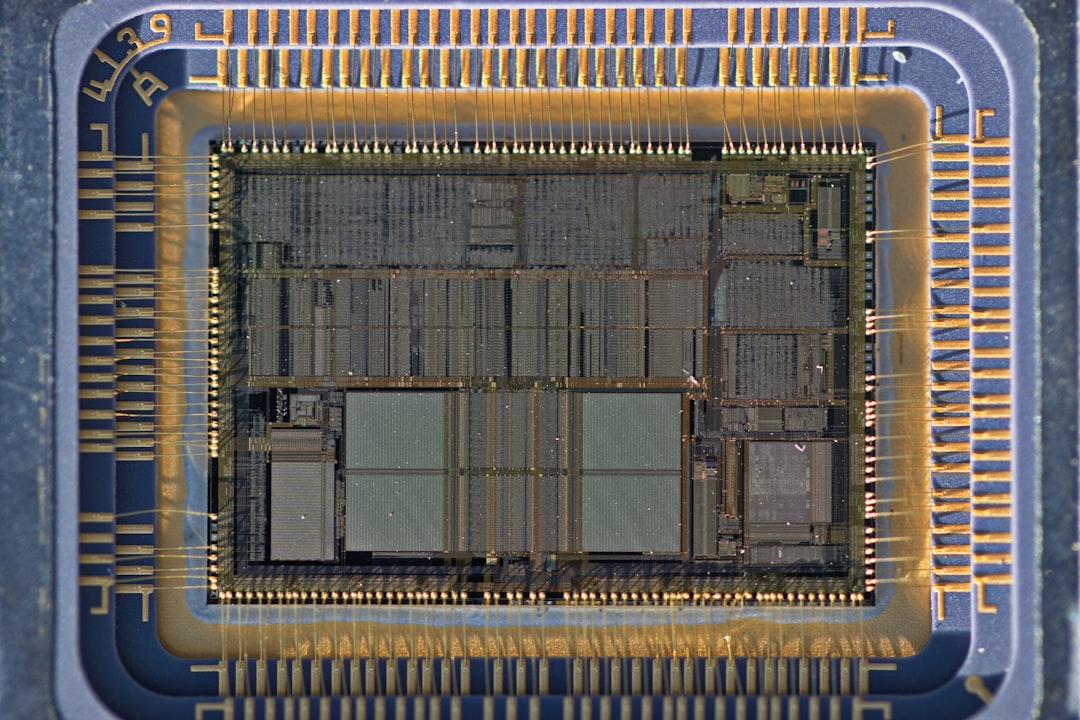

The FPGA Advantage: For genuine high-speed, deterministic DAC output, dedicated hardware like Field-Programmable Gate Arrays (FPGAs) remain the industry benchmark for their unparalleled performance and reliability.

Main Analysis: The Perils of Pushing Limits

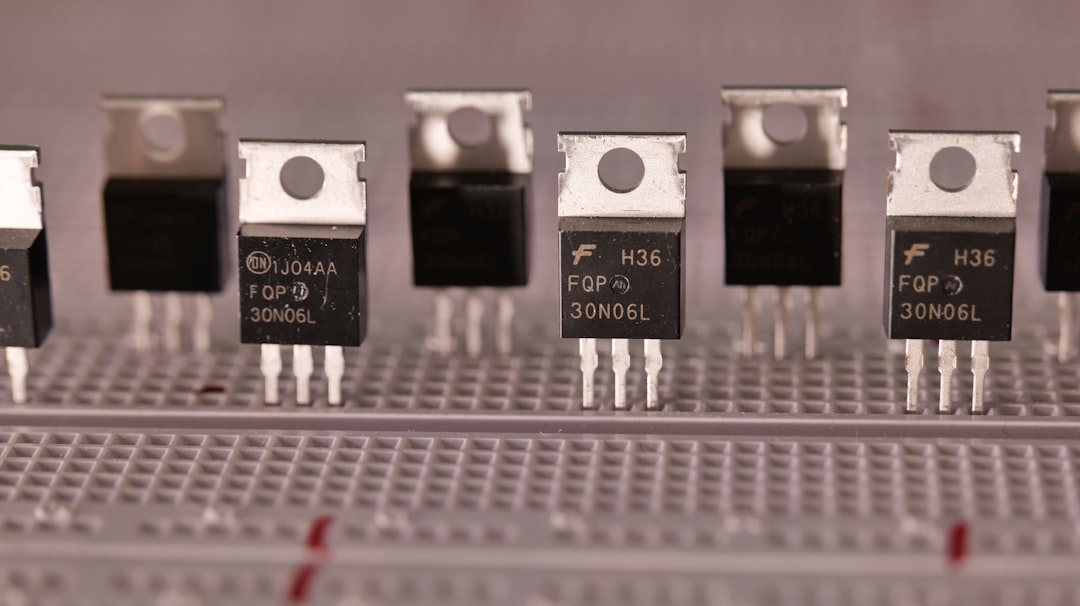

Across India's burgeoning tech landscape, from Bangalore's startups to Pune's manufacturing hubs, engineers are constantly seeking cost-effective solutions to complex problems. One such enduring quest involves squeezing maximum performance out of readily available components. The notion of driving a Digital-to-Analog Converter (DAC) at blazing speeds using a standard microcontroller – a device designed for sequential processing, not parallel high-frequency data streams – epitomises this drive. While technically fascinating, it's a pursuit fraught with more peril than practical advantage, a classic example of optimising for the wrong metrics.

The Allure of 'Good Enough' vs. Engineering Reality

The appeal is understandable: leverage an existing microcontroller, avoid the perceived complexity and cost of FPGAs, and deliver a 'fast' DAC output. However, this often translates to a system teetering on the edge of its operational limits. Microcontrollers, with their CPU-centric architectures, inherent interrupt latencies, and bus contention, struggle to maintain the precise, deterministic timing critical for high-fidelity DAC output. Unlike FPGAs, which can create dedicated, parallel hardware paths for data flow, microcontrollers must juggle multiple tasks, leading to jitter and inconsistencies that degrade signal quality.
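
To put a rough number on how timing jitter degrades signal quality, the sketch below applies the standard jitter-limited SNR relation for a full-scale sine wave, SNR = -20·log10(2π·f·σ), where f is the output frequency and σ is the RMS timing jitter. The function names, the 1 MHz tone, and the three jitter values are illustrative assumptions, not measurements of any particular device, and the relation assumes small, random jitter with every other noise source ignored.

```python
import math


def jitter_limited_snr_db(signal_hz: float, rms_jitter_s: float) -> float:
    """Upper bound on SNR (dB) for a full-scale sine when only
    sample-timing jitter is considered (small, random jitter assumed)."""
    if signal_hz <= 0 or rms_jitter_s <= 0:
        raise ValueError("signal_hz and rms_jitter_s must be positive")
    return -20.0 * math.log10(2.0 * math.pi * signal_hz * rms_jitter_s)


def effective_bits(snr_db: float) -> float:
    """Convert an SNR figure to an effective number of bits (ENOB)."""
    return (snr_db - 1.76) / 6.02


if __name__ == "__main__":
    tone_hz = 1e6  # assumed output tone, for illustration only
    # Assumed RMS jitter levels, for illustration only.
    for jitter_s in (10e-12, 1e-9, 50e-9):
        snr = jitter_limited_snr_db(tone_hz, jitter_s)
        print(f"jitter {jitter_s:.0e} s: SNR limit {snr:5.1f} dB, "
              f"about {effective_bits(snr):4.1f} effective bits")
```

Under this model, every tenfold increase in timing jitter costs 20 dB of achievable SNR, regardless of how many bits the DAC itself offers.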

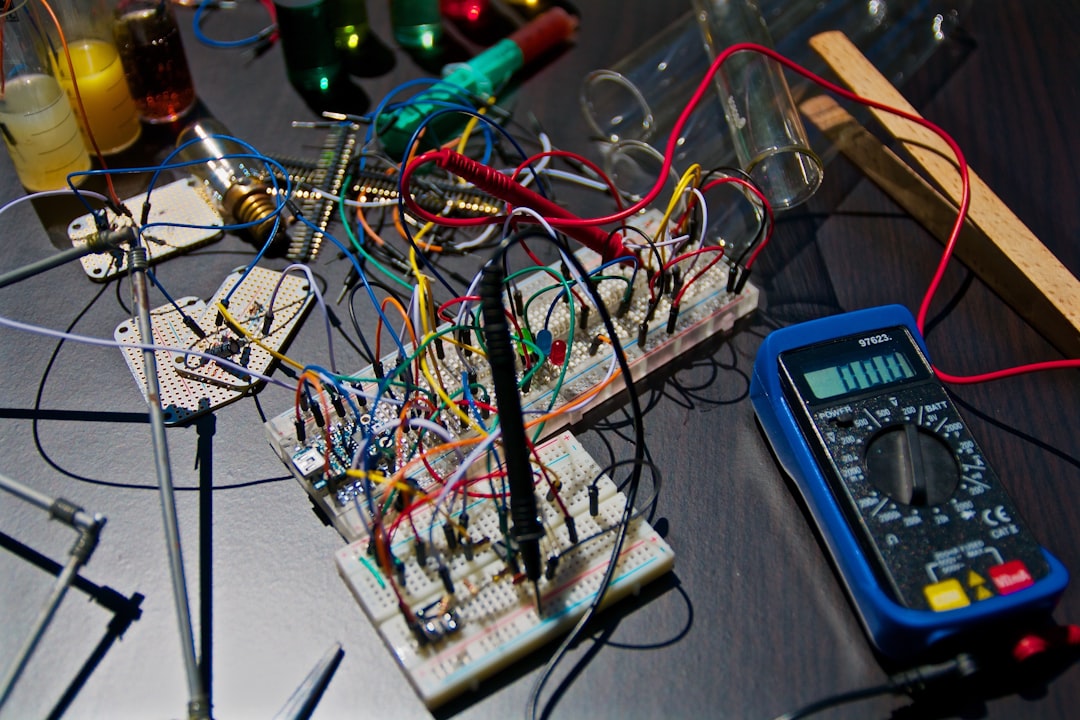

Consider the operational reality. To achieve high sample rates, a microcontroller must dedicate a substantial portion of its processing power, often relying on direct memory access (DMA) controllers and intricate interrupt routines. This isn't a 'clean' solution; it's a high-wire act where a single mis-timed interrupt or a competing peripheral request can throw the entire DAC output into disarray. For applications requiring high precision or spectral purity – be it in telecommunications, medical imaging, or advanced industrial control – such instability is simply unacceptable.
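
The "substantial portion of its processing power" can be estimated with simple arithmetic: the number of core clock cycles available between consecutive samples. The sketch below is a back-of-the-envelope calculation, not a model of any specific part. The 480 MHz core clock and the 24-cycle interrupt entry/exit overhead are assumed values chosen for illustration, and `sample_budget` is a hypothetical helper name.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SampleBudget:
    cycles_per_sample: float    # core cycles between consecutive DAC updates
    cycles_left_for_isr: float  # cycles remaining after interrupt overhead
    feasible_by_interrupt: bool  # True if any cycles remain for real work


def sample_budget(core_hz: float, sample_rate_hz: float,
                  isr_overhead_cycles: float) -> SampleBudget:
    """Estimate the per-sample cycle budget for interrupt-driven DAC updates."""
    if core_hz <= 0 or sample_rate_hz <= 0 or isr_overhead_cycles < 0:
        raise ValueError("clock and sample rate must be positive, "
                         "overhead must be non-negative")
    cycles = core_hz / sample_rate_hz
    left = cycles - isr_overhead_cycles
    return SampleBudget(cycles, left, left > 0)


if __name__ == "__main__":
    # Assumed figures: 480 MHz core, 10 MSPS target, 24 cycles of ISR overhead.
    budget = sample_budget(480e6, 10e6, 24)
    print(f"cycles per sample:      {budget.cycles_per_sample:.0f}")
    print(f"left after ISR overhead: {budget.cycles_left_for_isr:.0f}")
```

With these assumed numbers, each sample gets 48 core cycles, and half of them are gone before the handler does any useful work. Any flash wait state, bus stall, or competing interrupt that exceeds the remainder produces a late sample, which is why such designs lean on DMA and still have little margin.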

The Hidden Costs of 'Optimised' Microcontroller Solutions

The initial cost savings of forgoing an FPGA might seem attractive on paper, especially for smaller projects or educational exercises. Yet this often proves to be a false economy. The engineering effort required to develop, debug, and validate a high-speed DAC solution on a microcontroller is considerable. Engineers spend long hours optimising assembly code, tuning peripheral settings, and hunting down elusive timing bugs that a hardware-timed FPGA design would largely avoid. This translates directly into higher labour costs and extended development cycles, a critical concern for any project manager under pressure.

Furthermore, such highly optimised, resource-intensive microcontroller code is inherently less portable and harder to maintain. Any future modification to the system, or an upgrade to a different microcontroller, could necessitate a complete overhaul of the DAC driving logic. This lack of modularity and robustness runs counter to modern industrial best practices, where long-term maintainability and upgrade paths are paramount.

The argument for microcontrollers often rests on accessibility and ease of programming. While true for general-purpose tasks, pushing them into the domain of high-speed, parallel processing demands a level of expertise that negates much of this perceived simplicity. Engineers are forced to become experts in low-level hardware intricacies, rather than focusing on the higher-level application logic – a significant diversion of valuable intellectual capital.

The Industrial Imperative: Reliability Over Novelty

For critical applications in India's industrial sector – think precision manufacturing, energy management, or advanced instrumentation – reliability is not a luxury; it's a fundamental requirement. A DAC output that fluctuates due to CPU load, unpredictable interrupt service routines, or thermal variations is a ticking time bomb. FPGAs, by contrast, offer predictable, hardware-timed performance, making them the default choice for environments where failure is not an option. The ability to guarantee deterministic timing, even under varying system loads, is the cornerstone of robust industrial design.

Public Sentiment

Comments synthesised from various engineering forums and industry discussions point to a critical consensus:

- "It's an interesting academic exercise, but anyone deploying this in a real industrial product would be asking for trouble. Latency on a microcontroller is simply too unpredictable for critical DAC applications."
- "We spent months trying to get a stable 10 MSPS out of a high-end ARM M7, only to scrap it for a tiny CPLD. The 'savings' were imaginary, and the headaches were very real."
- "Why complicate things? If you need speed and precision from a DAC, you use an FPGA. Microcontrollers have their strengths, but this isn't one of them."
- "In the Indian context, where every rupee counts, the initial hardware cost saving might tempt. But the long-term support and debugging costs for such a hack quickly erode any perceived benefit."

Conclusion

While the ingenuity displayed in making a microcontroller drive a DAC 'real fast' is commendable, it's crucial for engineers and decision-makers in India to critically evaluate the true implications of such an approach. What appears as a clever workaround often introduces systemic fragility, elevates development complexity, and ultimately sacrifices the reliability that modern industrial and high-performance applications demand. For genuine high-speed, deterministic DAC output, FPGAs and purpose-built DAC controllers remain the proven, robust, and ultimately more economical solutions. Embracing the right tool for the job, rather than forcing a square peg into a round hole, is not just good engineering – it's essential for building sustainable, high-quality technology ecosystems.