The digital age promised unparalleled access to information, yet a recent case involving millions of government documents has exposed a stark reality: much of our digital data remains stubbornly inaccessible. Last November, the House Oversight Committee released 20,000 pages from the Jeffrey Epstein estate. Soon after, the Department of Justice followed suit, releasing more than three million additional files. The common denominator? All were PDFs, and, critically, many were virtually unsearchable.

Luke Igel, among others, found himself sifting through garbled email threads and navigating what he described as a “gross” PDF viewer. The Department of Justice had applied optical character recognition (OCR), but its quality was reportedly poor, leaving large portions of the text effectively unsearchable. This was more than an inconvenience; it was a significant barrier to transparency, journalistic inquiry, and the critical legal process of tracing connections within a high-profile case.

Key Takeaways:

- Scale of the Problem: Millions of government documents, often in PDF format, are being released with inadequate search capabilities.
- OCR Limitations: Current optical character recognition technology, even when applied, frequently fails to deliver truly searchable text for complex, historical, or low-quality scans.
- Impact on Transparency: The inability to effectively search these archives severely hampers public oversight, journalistic investigation, and legal discovery.
- Technological Gap: There is a pressing need for more sophisticated AI and data processing tools specifically designed to handle and contextualize large, disparate document sets.
- Systemic Challenge: The issue highlights a broader systemic failure in how government agencies manage and disseminate digital public records.

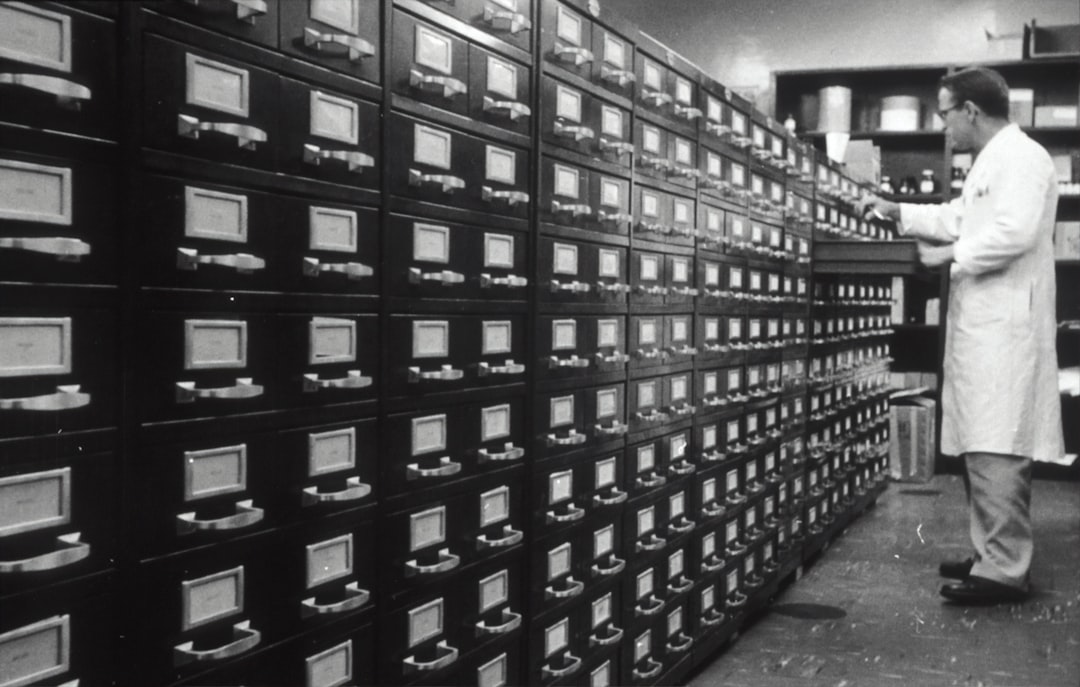

The Digital Information Paradox

For decades, the transition from paper to digital was lauded as the solution to archival challenges. Documents could be stored compactly, shared instantly, and accessed globally. Yet, as the Epstein case vividly illustrates, merely digitizing a document into a PDF does not automatically make it searchable or accessible. What matters is the quality of the underlying data: how well it is structured, indexed, and processed. When OCR fails, a digital document becomes little more than a high-resolution image that must be reviewed manually, page by page, a task that becomes impossible with millions of pages.
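One way to make "when OCR fails" concrete is to score the text layer of each page and flag pages that are unlikely to be searchable. The sketch below is only an illustration, not the method used by any agency: the function names, the regular expression, and the 0.6 threshold are all assumptions, and the pattern only recognizes plain ASCII English words.

```python
import re

# Hypothetical helper: share of tokens on a page that look like ordinary words.
def word_like_ratio(page_text: str) -> float:
    tokens = page_text.split()
    if not tokens:
        return 0.0  # no text layer at all: effectively just an image
    # A token counts as "word-like" if it is two or more ASCII letters,
    # optionally followed by one punctuation mark.
    word_like = [t for t in tokens if re.fullmatch(r"[A-Za-z]{2,}[.,;:!?]?", t)]
    return len(word_like) / len(tokens)

# Hypothetical threshold: pages scoring below min_ratio go to re-OCR or manual review.
def needs_review(page_text: str, min_ratio: float = 0.6) -> bool:
    return word_like_ratio(page_text) < min_ratio

clean = "Please forward the schedule to the committee before Friday."
garbled = "P1ea$e f0rw@rd th3 sch~dule t0 c0mm1ttee b4 Fr1d@y"

print(needs_review(clean))    # clean text passes the check
print(needs_review(garbled))  # garbled OCR output is flagged
print(needs_review(""))       # an empty text layer is flagged as well
```

A score like this cannot tell whether the recognized words are the right ones, but it is cheap enough to run over millions of pages and can help decide where re-processing effort should go first.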

This paradox is particularly acute in legal and governmental contexts where information volume is immense and accuracy is non-negotiable. The 'discovery' phase of any significant legal case often involves reviewing hundreds of thousands, if not millions, of documents. Without robust search and analytical tools, this process becomes prohibitively expensive, time-consuming, and prone to human error.

AI's Role: Promises and Present Limitations

The promise of Artificial Intelligence (AI) in document analysis is immense. Techniques such as advanced OCR, natural language processing (NLP), and machine learning can, in principle, extract entities, identify relationships, and summarize content from vast datasets. However, the reality, as demonstrated by the Department of Justice's attempts, often falls short, especially when confronted with the 'messiness' of real-world data.

The challenge lies not just in recognizing characters, but in understanding context, handling varied document layouts, deciphering handwritten notes, and connecting disparate pieces of information across millions of pages from different sources. The 'garbage in, garbage out' principle applies rigorously here: if the initial OCR is flawed, subsequent AI tools, no matter how advanced, will struggle to perform meaningful analysis. This necessitates a new generation of tools that are more resilient to imperfect inputs and capable of semantic understanding, moving beyond simple keyword matching to conceptual relevance.
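The gap between exact keyword matching and search that tolerates imperfect input can be shown with a small sketch. It uses Python's standard `difflib.SequenceMatcher` to compare a query against sliding windows of words; the `fuzzy_find` function, the 0.8 threshold, and the sample OCR string are assumptions made up for illustration, and character-level fuzzy matching is a much simpler technique than the semantic understanding described above.

```python
from difflib import SequenceMatcher

# Hypothetical helper: find word windows in `text` that approximately match `query`.
def fuzzy_find(query: str, text: str, threshold: float = 0.8):
    q = query.lower()
    words = text.split()
    n = len(q.split())  # compare against windows with the same word count
    hits = []
    for i in range(len(words) - n + 1):
        window = " ".join(words[i:i + n])
        # ratio() is a similarity score between 0.0 and 1.0
        score = SequenceMatcher(None, q, window.lower()).ratio()
        if score >= threshold:
            hits.append((window, round(score, 2)))
    return hits

# Invented example of typical OCR confusions: "0" for "O", "rn" for "m", "1" for "i".
ocr_text = "Mtg with the 0versight Comrnittee moved to Fr1day"

# Exact keyword search misses the phrase entirely.
print("oversight committee" in ocr_text.lower())

# Approximate matching still recovers the damaged phrase.
print(fuzzy_find("oversight committee", ocr_text))
```

This kind of matching recovers text that is damaged character by character, but it does nothing for layout, handwriting, or cross-document context, which is why flawed OCR remains a bottleneck for everything built on top of it.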

Public Sentiment: A Call for Better Governance and Tools

The frustration expressed by Luke Igel and others is widely echoed. The public expects transparency, particularly in cases of such national importance. The inability to navigate easily through public records breeds distrust and fuels speculation. Experts in data science and public policy are increasingly vocal about the need for governmental investment in superior digital infrastructure and intelligent document processing systems.

As one commentator noted, “We're sitting on a goldmine of information, but it’s buried under a mountain of digital sand. We need better shovels, and more importantly, better maps.” This sentiment highlights a demand not just for technology, but for a proactive approach to information governance that anticipates the scale and complexity of modern data releases.

Conclusion

The episode with the Epstein documents serves as a potent reminder that the digital frontier is still riddled with fundamental challenges. True transparency and effective oversight in the 21st century demand more than just digitization; they require intelligent processing, robust search capabilities, and a commitment to making information genuinely accessible. As AI technologies continue to evolve, their application in public record management will be a crucial battleground, determining whether democratic principles of transparency can truly keep pace with the ever-expanding volume of digital data. The future of public accountability hinges on our ability to harness these tools effectively, transforming raw data into actionable insight.