Key Takeaways

- Engineering Prowess, Practical Doubts: Intel's 3kW water-cooled PSU is a technical achievement but raises concerns about its real-world applicability outside niche scenarios.
- Complexity vs. Reliability: The intricate design, reliant on liquid cooling, introduces new points of failure and significant maintenance challenges for general data center environments.
- Cost-Benefit Imbalance: The specialized infrastructure, higher acquisition cost, and operational overhead may far outweigh the density benefits for most enterprises.
- Market Readiness Questioned: The 'reference design' label points to future possibilities but also signals that this is far from a broadly viable commercial product; it may be pushing boundaries without clear market demand for its specific form factor.

Main Analysis: The Liquid Logic of Power Density

The Pursuit of Density at What Cost?

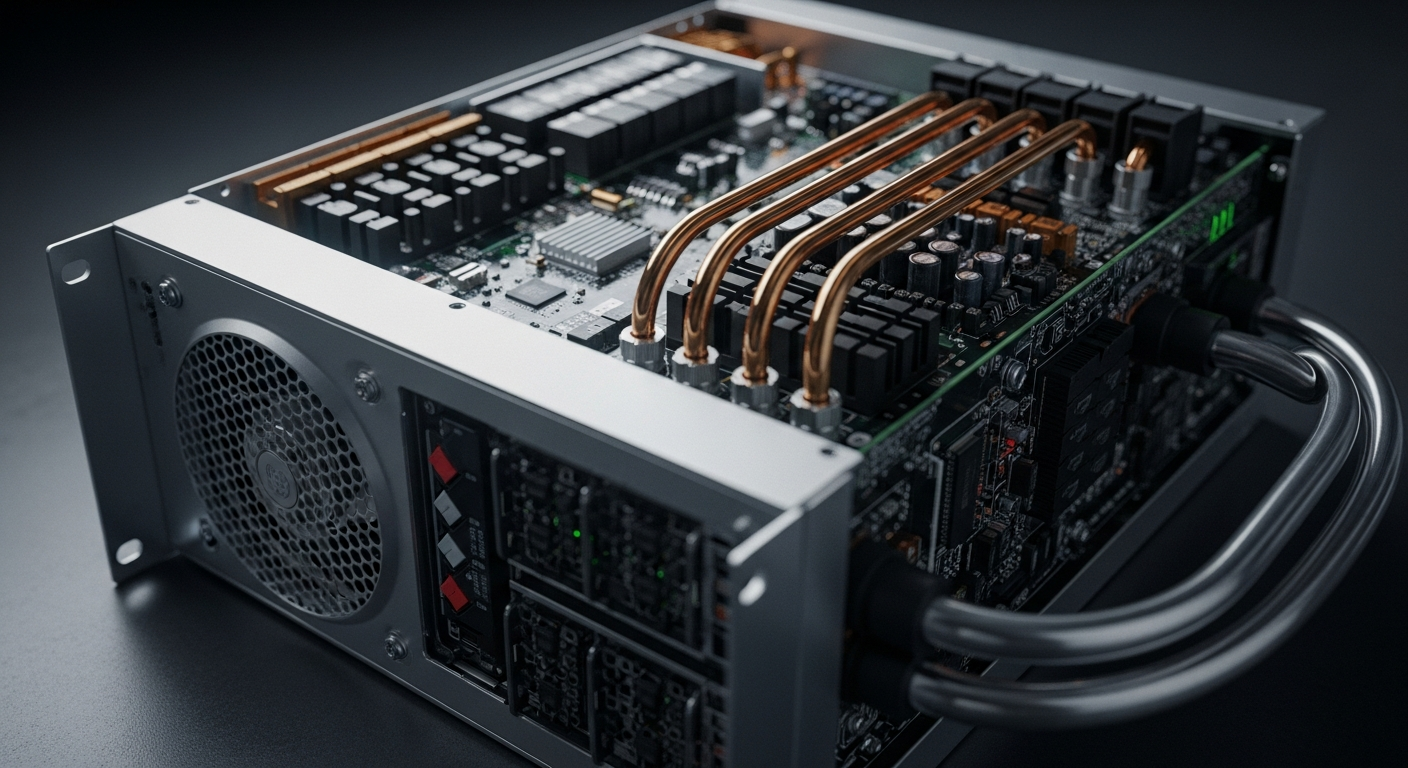

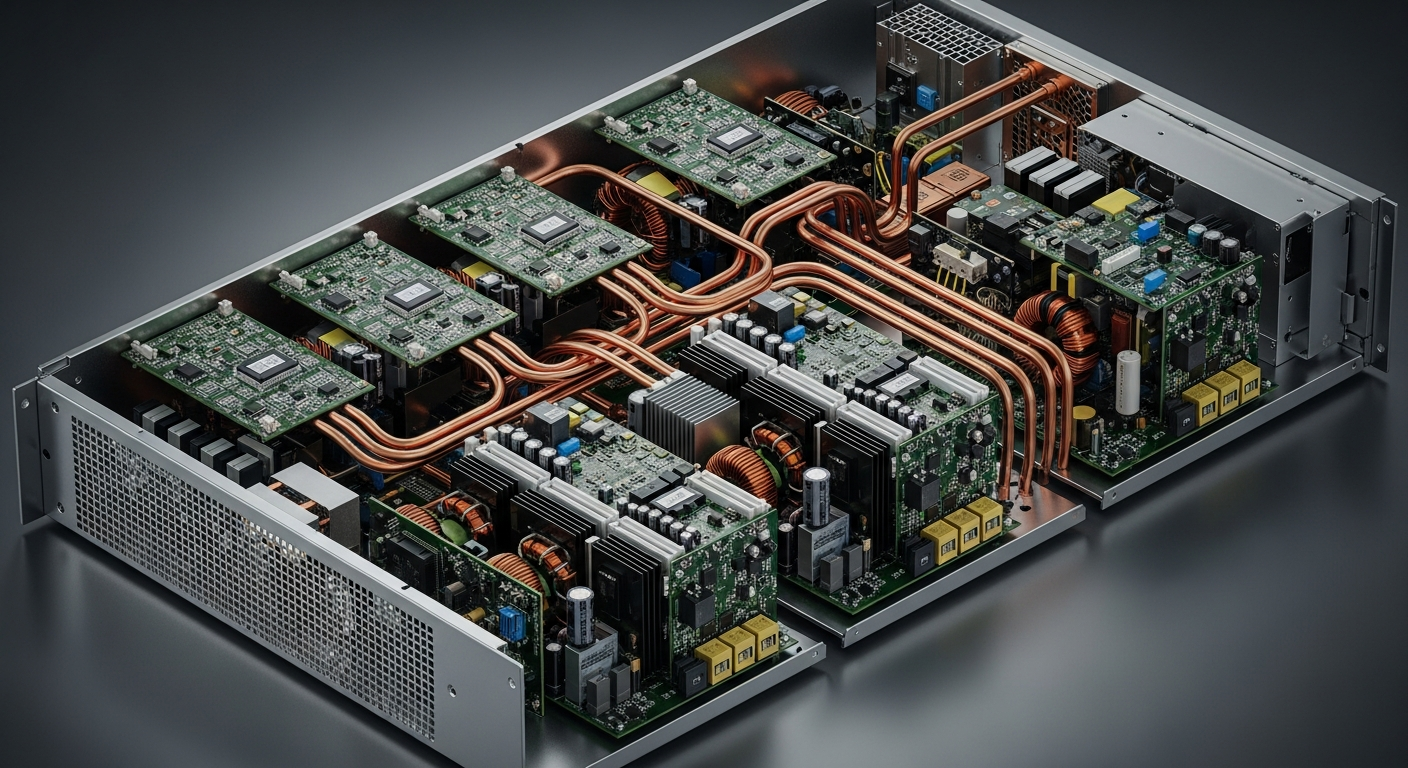

The drive toward greater power density in data centers is a fundamental force, pushing hardware manufacturers to cram more computational power into smaller footprints. Intel's 3kW water-cooled power supply, reportedly designed as a server reference PSU, is a prime example of this push. Delivering 3,000 watts of output in such a compact, directly liquid-cooled form factor is an undeniable engineering achievement. It demonstrates a sophisticated command of power electronics and thermal management, packing a great deal of energy-conversion capability into a small package. However, the 'wow' factor quickly gives way to pragmatic questions. Is this density truly necessary, or is it an over-engineered answer to a problem that conventional means can solve more affordably and reliably? For the vast majority of server applications, where rack space is valuable but not at such an extreme premium, the density gains might not justify the complexity that liquid cooling brings.

Water Cooling in the Data Center: A Double-Edged Sword

Liquid cooling, in theory, removes heat more effectively than traditional air cooling, allowing for higher power envelopes and quieter operation. For specific high-performance computing (HPC) clusters or environments with extreme heat loads, it is increasingly indispensable. Yet a power supply is a component historically valued for its robust simplicity, and introducing liquid cooling to it is a significant shift with inherent risks. Leaks, corrosion, specialized coolant management, and the need for external cooling infrastructure (pumps, chillers, piping) transform a relatively straightforward component into an intricate subsystem. This complexity translates directly into higher initial capital expenditure, higher operational costs for maintenance, and a greater potential for catastrophic failure if a leak occurs within a live server environment. Air-cooled PSUs, even if they remove heat less effectively, often remain the preferred choice for their 'set-it-and-forget-it' dependability and ease of deployment.
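To put the thermal side of this in rough numbers, here is a minimal back-of-the-envelope sketch in Python. It estimates how much waste heat a 3,000 W supply would shed and how much water flow would be needed to carry that heat away, using the standard relation heat = mass flow × specific heat × temperature rise. The 94% conversion efficiency and the 10 K coolant temperature rise are illustrative assumptions, not figures from Intel or from this article, and the function names are hypothetical.

```python
# Back-of-the-envelope PSU heat load and coolant flow estimate.
# ASSUMPTIONS (not from Intel or the article): the efficiency and the
# coolant temperature rise used in the example run are illustrative only.

# Specific heat capacity of liquid water in J/(kg*K), a standard constant.
WATER_SPECIFIC_HEAT = 4186.0


def waste_heat_watts(output_watts: float, efficiency: float) -> float:
    """Heat dissipated inside the PSU for a given output power and efficiency."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in the range (0, 1]")
    input_watts = output_watts / efficiency
    return input_watts - output_watts


def coolant_flow_kg_per_s(
    heat_watts: float,
    delta_t_kelvin: float,
    specific_heat: float = WATER_SPECIFIC_HEAT,
) -> float:
    """Mass flow needed to absorb heat_watts with a given coolant temperature rise."""
    if delta_t_kelvin <= 0.0:
        raise ValueError("delta_t_kelvin must be positive")
    return heat_watts / (specific_heat * delta_t_kelvin)


if __name__ == "__main__":
    # Hypothetical operating point: 3,000 W out, 94% efficient, 10 K rise.
    heat = waste_heat_watts(3000.0, 0.94)
    flow = coolant_flow_kg_per_s(heat, 10.0)
    print(f"Waste heat: {heat:.1f} W")
    print(f"Water flow: {flow:.4f} kg/s ({flow * 60:.2f} kg/min)")
```

Under those assumed values the supply would shed roughly 190 W of heat, which a water flow of about 0.27 liters per minute could absorb. Real figures depend on the actual efficiency curve and loop design, neither of which is given here.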

Market Viability and "Reference Design" Realities

The label 'reference design' is telling. It signifies a proof-of-concept, a demonstration of what's technically possible, rather than a commercially ready, mass-market product. While Intel's innovation certainly showcases the potential for future server power delivery, it also implies that the ecosystem—from data center infrastructure to IT operational expertise—is not yet broadly prepared for such a solution. The transition to widespread liquid cooling requires significant investment in new facility designs, specialized plumbing, and personnel trained to manage liquid-based systems. For many organizations, the perceived benefits of a slightly smaller, liquid-cooled PSU are likely outweighed by the substantial overhaul required. It prompts the question: is Intel designing for a future that is still too distant for practical adoption, or is this a niche product targeting only the most extreme server environments, leaving the vast majority of the market to rely on more conventional, and perhaps more pragmatic, power solutions?

Public Sentiment: A Cautious Welcome

Public discourse around such advanced hardware often reflects a blend of awe and apprehension. On one hand, there's admiration for the engineering feat: "It's incredible what they're packing into these smaller sizes! The thermal challenges must be immense." On the other, a strong undercurrent of skepticism persists regarding practicality and cost: "Another solution that's great on paper but will likely be too expensive and complex for most data centers to implement at scale. Who wants to deal with water near their servers?" IT managers frequently voice concerns about long-term reliability and maintenance overhead: "One leak and your whole rack is gone. We already have enough points of failure; adding a liquid loop to a PSU feels like unnecessary risk." Environmental considerations also surface, with questions about the lifecycle impact of specialized coolants and their disposal. The consensus leans towards appreciating the innovation, but with significant reservations about its immediate real-world utility for anything beyond very specialized, bleeding-edge applications.

Conclusion

Intel's 3kW water-cooled power supply is a double-edged sword: a brilliant demonstration of engineering capability, yet one that seems to outpace the practical needs and infrastructural readiness of the general server market. While pushing the boundaries of what's possible in power density is commendable, the real challenge lies in creating solutions that are not only technologically advanced but also economically viable, reliable, and easily deployable. For the 'Rusty Tablet,' this reference design serves as a powerful reminder that innovation, however impressive, must ultimately align with tangible benefits and manageable complexities to achieve widespread adoption. Until the operational overhead of liquid cooling becomes significantly less burdensome, this compact powerhouse may remain an interesting artifact of technological ambition rather than a cornerstone of mainstream server infrastructure.