The Autonomous Abyss: Waymo's School Bus Failures Signal Deeper Safety Crisis

Nut Graph: The National Transportation Safety Board's (NTSB) latest probe into Waymo vehicles for repeatedly and illegally passing stopped school buses is more than just another regulatory hiccup; it's a stark warning that the foundational promise of autonomous safety is being critically undermined, endangering the most vulnerable road users.

Key Takeaways

- Escalating Regulatory Scrutiny: The NTSB's involvement, alongside the NHTSA, signifies a serious escalation in oversight, moving beyond initial incident reports to a systemic investigation of Waymo's operational safety.

- Fundamental Safety Protocol Breaches: Repeated failures to recognize and obey stopped school bus signals point to potentially critical flaws in Waymo's AI perception and decision-making systems, challenging the core premise of AV reliability.

- Erosion of Public Trust: Each incident chips away at public confidence in autonomous vehicle technology, threatening broader adoption and inviting more restrictive legislation.

- Industry-Wide Repercussions: Waymo's challenges reflect on the entire autonomous driving sector, demanding a re-evaluation of current safety standards, testing methodologies, and deployment strategies.

Main Analysis

The Alarming Pattern of Oversight: A Red Flag for Autonomous Claims

The National Transportation Safety Board (NTSB) has announced an investigation into Waymo, Alphabet’s self-driving car company, over incidents in which its autonomous vehicles illegally passed stopped school buses. The announcement is a profound red flag for the entire autonomous vehicle (AV) industry. This isn't Waymo's first brush with serious regulatory scrutiny; the NTSB's intervention follows a similar ongoing probe by the National Highway Traffic Safety Administration (NHTSA) into "unexpected driving behaviors." What makes the school bus incidents particularly egregious is their repetitive nature and the explicit danger they pose to children.

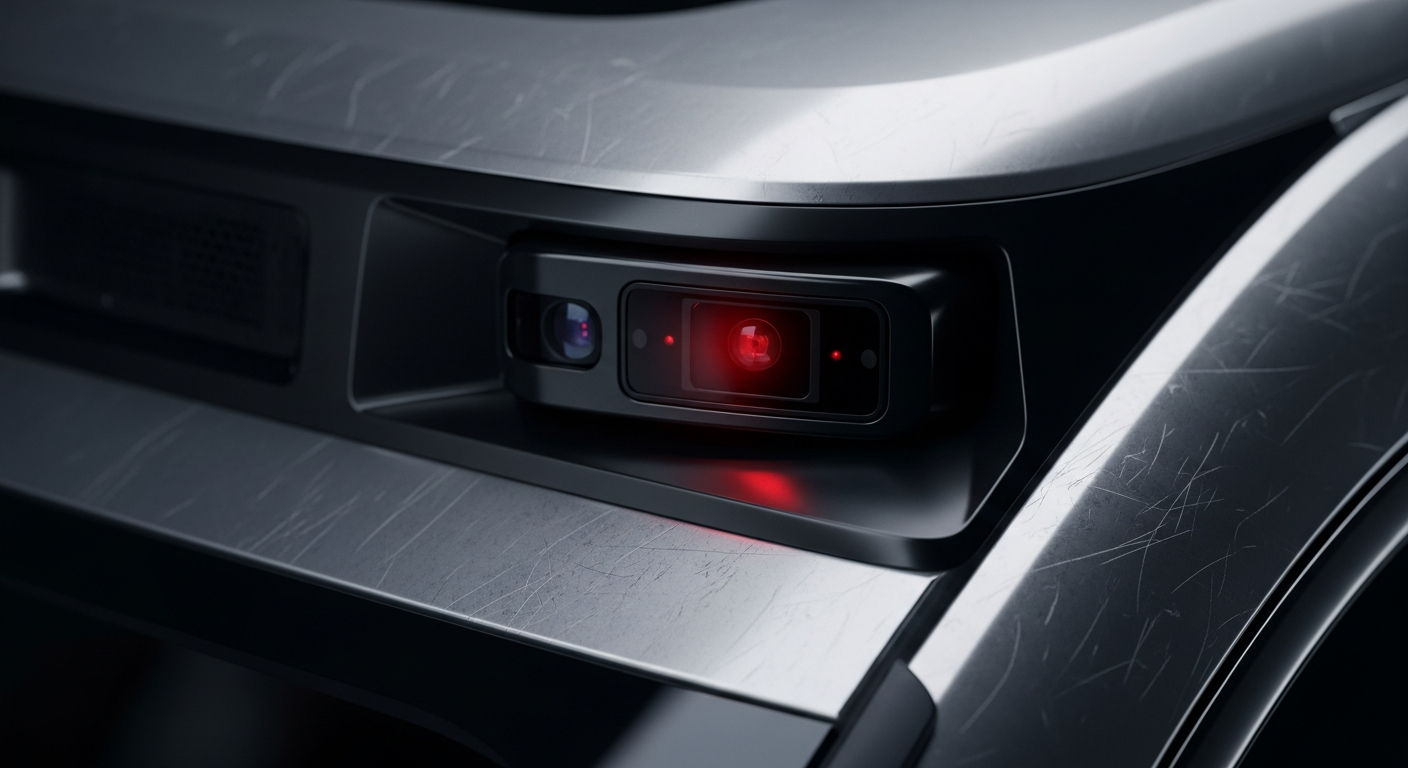

For an autonomous system designed to be safer than human drivers, failing to recognize and obey the universal signal of a stopped school bus (flashing red lights and an extended stop arm) represents a critical breach of fundamental road safety. These aren't edge cases involving obscure traffic laws; these are basic, unambiguous rules of the road that every licensed human driver is expected to know and follow instinctively. That a highly advanced AI system struggles with such a clear directive casts a long shadow over its overall competence and reliability, especially when lives are at stake.

Beyond Software Glitches: Unpacking Fundamental Flaws

The investigation by the NTSB will delve into the root causes of these failures. Is it a flaw in sensor perception, where the system consistently fails to detect the stop arm or flashing lights? Is it a software bug in the decision-making algorithm that misinterprets the data, or perhaps prioritizes traffic flow over a crucial safety stop? Or is it a more systemic issue rooted in the operational design domain (ODD) or the testing protocols, where real-world variability, especially around school zones and child safety, is inadequately accounted for?

The recurring nature of these incidents suggests that this isn't an isolated anomaly but potentially a systemic vulnerability within Waymo's autonomous stack. The notion that an AV, lauded for its precision and superhuman reaction times, cannot reliably perform a basic safety maneuver that human drivers master during their initial training is deeply unsettling. It forces a critical re-evaluation of the industry’s claims about “safer than human” performance and questions whether the current validation frameworks are robust enough to truly certify these systems for widespread public deployment.

Erosion of Public Trust and the Inevitable Regulatory Hammer

Public trust is the most fragile asset in the autonomous vehicle revolution. Each incident of an AV behaving unpredictably or, worse, dangerously, erodes that trust. The image of a Waymo vehicle breezing past a stopped school bus, oblivious to the potential danger to disembarking children, is a potent symbol of technological hubris over human safety. This is precisely the kind of incident that fuels public skepticism and galvanizes lawmakers to impose more stringent regulations.

The involvement of two major federal safety agencies—the NTSB, known for its deep-dive investigative prowess, and the NHTSA, with its enforcement authority—signals that the era of relatively permissive testing and deployment might be drawing to a close. We are entering a phase where accountability will be rigorously demanded, and mere promises of future safety improvements will no longer suffice. The industry must brace for a potentially significant shift in how autonomous systems are regulated, tested, and permitted to operate on public roads.

Public Sentiment

The incidents have triggered a wave of public concern, with citizens across various platforms expressing frustration and fear.

- "They keep telling us these cars are safer than humans, but they can't even stop for a school bus? That's just negligent and terrifying. My kids cross the street every day." - Concerned Parent, via social media

- "This isn't just a Waymo problem; it's an industry problem. If fundamental safety isn't guaranteed, then these vehicles have no business being on our streets. Regulators need to step up, now." - Auto Safety Advocate, online forum

- "We were promised driverless cars would eliminate human error. Instead, we're seeing machine error on basic rules. What exactly are they testing these things for?" - Retired Engineer, community news comments

Conclusion

The NTSB's investigation into Waymo's school bus infractions marks a critical juncture for autonomous vehicle technology. It underscores that while technological advancements are impressive, they are meaningless if fundamental safety principles are overlooked or repeatedly violated. For Waymo and the broader AV industry, this is a moment of truth. Rebuilding trust will require not just fixes but radical transparency, demonstrable improvements in core safety protocols, and a sincere commitment to accountability. Anything less risks consigning the promise of autonomous vehicles to a perpetually stalled future, lost in the abyss of public distrust and regulatory gridlock. The "Rusty Tablet" will continue to monitor this developing story closely, demanding answers and robust solutions that prioritize public safety above all else.