Key Takeaways

- AI agents must explicitly account for real-world constraints like token usage, latency, and tool-call budgets.
- Cost-aware planning involves generating candidate actions, estimating their costs and benefits, and selecting optimal plans.
- This approach moves agents beyond simple LLM calls, fostering efficiency and resource awareness.
- Robust budgeting abstractions and flexible execution paths (local vs. LLM) are critical for practical deployment.
- The ability to validate planning assumptions against actual spend closes the loop for continuous refinement.

The Myth of Unlimited Resources: Why AI Needs a Budget

For years, the discourse around AI has centered on capabilities – what can it do? But as sophisticated AI agents move from research labs to real-world deployment, a more pragmatic question emerges: what can it afford to do? The prevailing "always use the LLM" mentality, while showcasing raw power, is fiscally unsustainable and operationally inefficient. Large Language Models are phenomenal tools, but they come with a price tag – in tokens consumed, in milliseconds of latency, and in the hard costs of API calls. Ignoring these constraints is not just naive; it's a recipe for budget overruns, slow performance, and ultimately, failed deployments. The true intelligence of an agent isn't just about processing information, but about making smart choices within limits, treating resources as a first-class consideration, not an afterthought.

Engineering Frugality: The Core Principles of Cost-Aware Agents

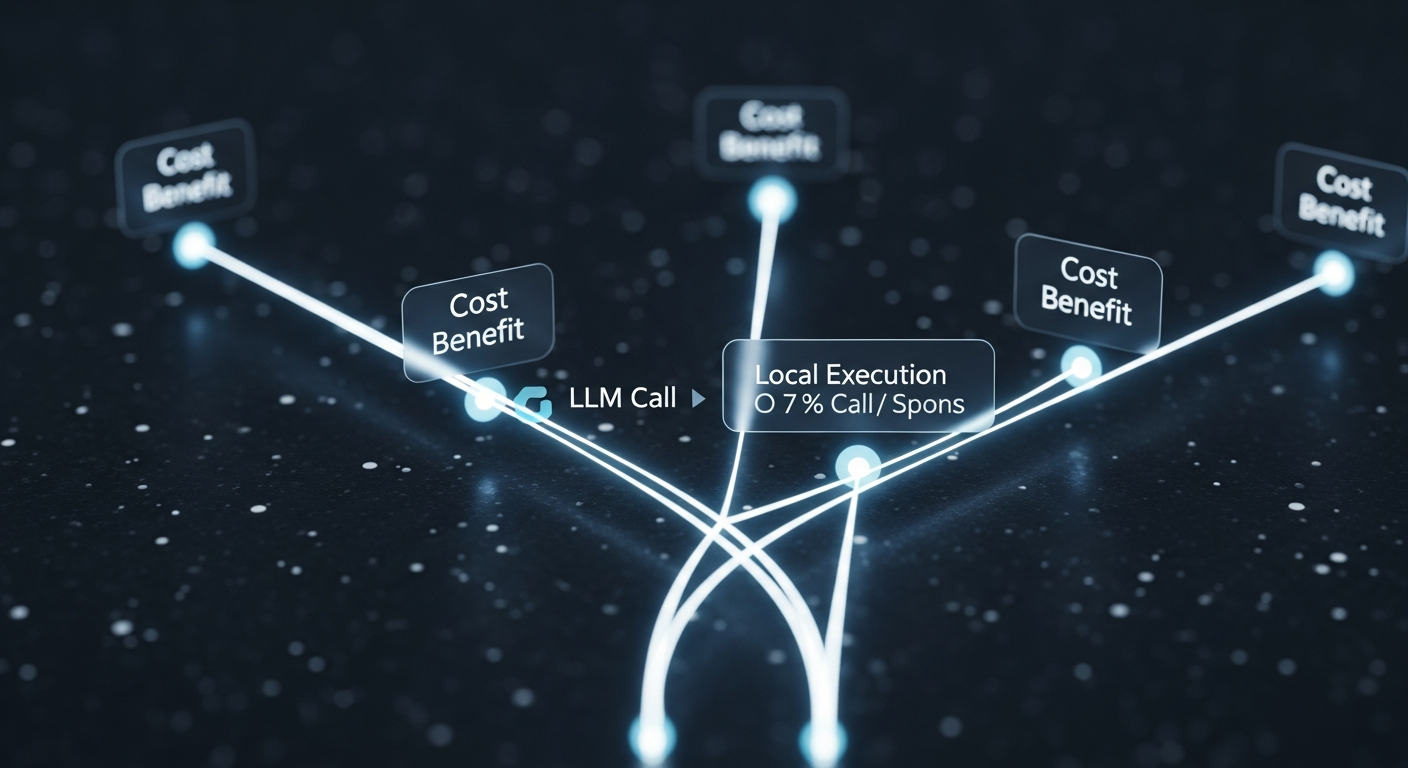

The solution lies in designing agents that are inherently cost-aware, capable of deliberate trade-offs. This isn't about dumbing down AI; it's about maturing it. At its heart, a cost-aware agent operates on a principle of informed choice. It generates a diverse array of potential actions, critically assessing each for its expected cost and benefit. Imagine an agent considering whether to query a massive LLM for a nuanced answer, or to leverage a faster, cheaper local heuristic for a sufficient one. This intelligent deliberation is what sets it apart.

Key to this architecture are robust budgeting abstractions. Tokens, latency, and tool calls are not just metrics to be passively observed; they are active variables in the decision-making process. By modeling these as first-class quantities, the agent gains the ability to accumulate, validate, and enforce spending limits throughout its operation. This foundational layer allows for a clean separation between the abstract reasoning about actions and the concrete details of their execution. Individual action choices and full plan candidates are represented with clarity, while a lightweight LLM wrapper standardizes how text is generated and measured, ensuring consistency and measurability. This approach cultivates an environment where efficiency isn't accidental, but engineered.
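To make the idea of first-class budget quantities concrete, here is a minimal sketch in Python. The names `Spend`, `Budget`, `Ledger`, and `BudgetExceeded` are hypothetical and chosen for illustration; they are not taken from any particular library. The sketch shows the three operations the paragraph above names: accumulating spend, validating it against limits, and enforcing those limits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Spend:
    """Resources consumed (or estimated) by one action. Hypothetical type."""
    tokens: int = 0
    latency_ms: float = 0.0
    tool_calls: int = 0

    def __add__(self, other: "Spend") -> "Spend":
        # Accumulate: spends combine field by field.
        return Spend(
            self.tokens + other.tokens,
            self.latency_ms + other.latency_ms,
            self.tool_calls + other.tool_calls,
        )


@dataclass(frozen=True)
class Budget:
    """Hard limits for a whole run. Hypothetical type."""
    max_tokens: int
    max_latency_ms: float
    max_tool_calls: int

    def allows(self, spend: Spend) -> bool:
        # Validate: every dimension must stay within its limit.
        return (
            spend.tokens <= self.max_tokens
            and spend.latency_ms <= self.max_latency_ms
            and spend.tool_calls <= self.max_tool_calls
        )


class BudgetExceeded(RuntimeError):
    """Raised when a charge would push total spend past the budget."""


class Ledger:
    """Running total that refuses charges which would break the budget."""

    def __init__(self, budget: Budget) -> None:
        self.budget = budget
        self.spent = Spend()

    def charge(self, spend: Spend) -> None:
        # Enforce: reject the charge before recording it.
        total = self.spent + spend
        if not self.budget.allows(total):
            raise BudgetExceeded(f"would exceed budget: {total}")
        self.spent = total
```

A planner could call `Budget.allows` on estimated spend before committing to a plan, while an executor could call `Ledger.charge` with measured spend after each step. Keeping `Spend` immutable is a design choice that makes estimates and actuals safe to pass around and compare.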

Strategic Planning and Dynamic Execution: Optimizing Value Under Constraints

The true ingenuity of a cost-aware agent manifests in its planning and execution phases. Instead of blindly executing the first plausible action, it engages in a sophisticated search for the highest-value combination of steps that adheres to its strict budget. This involves generating a rich set of candidate steps – including both LLM-based options for high-quality output and leaner, local alternatives for efficiency. Crucially, the system can even use the model itself to suggest low-cost improvements, expanding its action space without compromising fiscal discipline.
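Candidate generation as described above might look roughly like the following sketch. Everything here is an assumption made for illustration: the `Candidate` type, the `generate_candidates` function, the optional `suggest` callback standing in for model-proposed improvements, and especially the cost and value numbers, which are placeholders rather than measurements.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Candidate:
    """One possible step with its estimated cost and benefit (hypothetical)."""
    name: str
    mode: str              # "local" or "llm"
    est_tokens: int
    est_latency_ms: float
    est_value: float       # arbitrary units; higher is better


def generate_candidates(
    subtasks: List[str],
    suggest: Optional[Callable[[List[str]], List[str]]] = None,
) -> List[Candidate]:
    """Emit a cheap local option and a costlier LLM option per subtask.

    The numeric estimates below are made-up placeholders.
    """
    candidates: List[Candidate] = []
    for task in subtasks:
        candidates.append(Candidate(task, "local", 0, 20.0, 1.0))
        candidates.append(Candidate(task, "llm", 600, 1200.0, 3.0))
    if suggest is not None:
        # Extra low-cost ideas, e.g. proposed by the model itself.
        for extra in suggest(subtasks):
            candidates.append(Candidate(extra, "local", 0, 20.0, 0.5))
    return candidates


# Usage: a fixed list stands in for a model-backed suggestion function.
options = generate_candidates(
    ["summarize", "extract_risks"],
    suggest=lambda tasks: ["add_checklist"],
)
```

In a real system the estimates would presumably come from past measurements or a cost model; the point of the sketch is only that every candidate carries its estimated cost and benefit with it into planning.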

This planning phase often employs a beam-style search, which keeps several promising partial plans alive at each step instead of committing to one, with a critical addition: redundancy penalties. Penalizing plans whose steps overlap steers the agent away from paying twice for the same work, keeping the selected plan lean as well as effective. This is where the agent transcends simple logic and truly becomes an optimizer, maximizing utility while staying firmly within its financial and temporal bounds.
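A beam search with a redundancy penalty can be sketched as follows. This is one possible reading of the idea, not a reference implementation: the `Step` type, the rule that steps sharing a `topic` count as redundant, the single token budget, and all numbers are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Step:
    name: str
    topic: str    # assumption: steps that share a topic are redundant
    cost: int     # estimated tokens
    value: float  # estimated benefit, arbitrary units


Plan = Tuple[Step, ...]


def plan_score(plan: Plan, penalty: float) -> float:
    """Total value minus a fixed penalty per redundant step."""
    topics = [s.topic for s in plan]
    duplicates = len(topics) - len(set(topics))
    return sum(s.value for s in plan) - penalty * duplicates


def beam_search(steps: List[Step], budget: int, beam_width: int = 3,
                max_len: int = 3, penalty: float = 2.0) -> Plan:
    beam: List[Plan] = [()]
    best: Plan = ()
    for _ in range(max_len):
        expanded: List[Plan] = []
        seen = set()  # avoid keeping reorderings of the same step set
        for plan in beam:
            used = sum(s.cost for s in plan)
            for step in steps:
                if step in plan or used + step.cost > budget:
                    continue  # already used, or would break the budget
                key = frozenset(s.name for s in plan) | {step.name}
                if key in seen:
                    continue
                seen.add(key)
                expanded.append(plan + (step,))
        if not expanded:
            break
        # Keep only the highest-scoring partial plans.
        expanded.sort(key=lambda p: plan_score(p, penalty), reverse=True)
        beam = expanded[:beam_width]
        if plan_score(beam[0], penalty) > plan_score(best, penalty):
            best = beam[0]
    return best


# Illustrative candidates with made-up costs and values.
STEPS = [
    Step("llm_summary", "summary", 600, 3.0),
    Step("local_summary", "summary", 50, 1.0),
    Step("local_keywords", "keywords", 50, 1.5),
    Step("llm_risks", "risks", 500, 2.5),
]
best = beam_search(STEPS, budget=700)
```

With these made-up numbers, the search settles on `llm_risks`, `local_keywords`, and `local_summary`: pairing `llm_summary` with `local_summary` would fit the budget but is penalized because both cover the same topic. Like any beam search, this is a heuristic and can miss the true optimum when the beam is narrow.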

Once a plan is selected, execution is equally deliberate. The agent dynamically chooses between local and LLM execution paths based on real-time conditions and the pre-computed plan, tracking actual resource usage step by step. This meticulous tracking allows for a crucial feedback loop: comparing estimated spend against actual consumption. This comparison validates planning assumptions and provides invaluable data for continuous refinement, ensuring the agent learns and adapts to perform even more efficiently in the future. It’s a closed-loop system of foresight, action, and retrospective analysis that drives continuous improvement.
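The execution loop and the estimate-versus-actual comparison might be sketched like this. All names are hypothetical, `run_llm_stub` is a stand-in for a real model call, token counts are approximated by whitespace splitting, and the fallback rule (switch to the local path when an LLM step's estimate no longer fits the remaining budget) is just one plausible policy.

```python
import time
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class PlannedStep:
    name: str
    mode: str        # "local" or "llm", as chosen by the planner
    est_tokens: int  # planner's estimate


def run_local(prompt: str) -> Tuple[str, int]:
    """Cheap heuristic path: truncate the prompt, consume no tokens."""
    return prompt[:40], 0


def run_llm_stub(prompt: str) -> Tuple[str, int]:
    """Stand-in for a real model call; counts whitespace-separated words."""
    reply = f"[model reply to: {prompt}]"
    return reply, len(prompt.split()) + len(reply.split())


def execute(plan: List[PlannedStep], prompt: str,
            token_budget: int) -> List[Dict[str, object]]:
    runners = {"local": run_local, "llm": run_llm_stub}
    spent = 0
    report: List[Dict[str, object]] = []
    for step in plan:
        mode = step.mode
        # Assumed policy: fall back to local if the estimate no longer fits.
        if mode == "llm" and spent + step.est_tokens > token_budget:
            mode = "local"
        start = time.perf_counter()
        _, tokens = runners[mode](prompt)
        latency_ms = (time.perf_counter() - start) * 1000
        spent += tokens
        report.append({
            "step": step.name,
            "mode": mode,
            "est_tokens": step.est_tokens,
            "actual_tokens": tokens,
            "drift": tokens - step.est_tokens,  # actual minus estimate
            "latency_ms": latency_ms,
        })
    return report


plan = [PlannedStep("summarize", "llm", 20), PlannedStep("expand", "llm", 500)]
report = execute(plan, "Summarize the quarterly report", token_budget=100)
```

The `drift` field is the feedback signal: consistently positive drift suggests the planner underestimates cost, and consistently negative drift suggests it is leaving budget unused.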

Public Sentiment: The Demand for Responsible AI

The industry is collectively waking up to the practical implications of unchecked AI resource consumption.

- "For too long, AI has been a black box of unpredictable costs. This focus on budgeting is a game-changer for businesses."
- "It's not just about performance anymore; it's about sustainable, cost-effective AI. This is the practical side we've been waiting for."
- "The idea of an AI agent weighing its options like a human project manager – considering time and money – that's truly intelligent. This is what we need for real-world adoption."

Conclusion: The Future is Fiscally Intelligent

The era of limitless AI resources is over. What was once an abstract research challenge has become a pressing economic and operational imperative. By embedding cost-awareness into the very fabric of AI agent design, we unlock systems that are not only more practical and controllable but also immensely more scalable. Treating cost, latency, and tool usage as fundamental decision variables, rather than mere afterthoughts, transforms AI from a powerful but unpredictable expenditure into a reliable, efficient, and ultimately, indispensable asset. The move from "always use the LLM" to "optimize value under constraints" represents a profound maturation of artificial intelligence – one that promises to reshape how we build, deploy, and manage intelligent systems for years to come.