The digital workplace has long been a battleground for attention, a cacophony of tabs, applications, and notifications vying for user engagement. In this context, the appeal of a centralized intelligent agent, capable of orchestrating tasks across disparate platforms, is undeniable. Anthropic, a prominent AI developer, has thrown its hat into this ring, proclaiming that its Claude chatbot, now bolstered by an extension to the open-source Model Context Protocol (MCP), will transform how users interact with tools like Slack, Figma, Asana, and Canva. The pitch is compelling: imagine crafting a presentation, assigning a task, or drafting a team message, all from within Claude's chat interface, sidestepping the tedious tab-switching ritual.

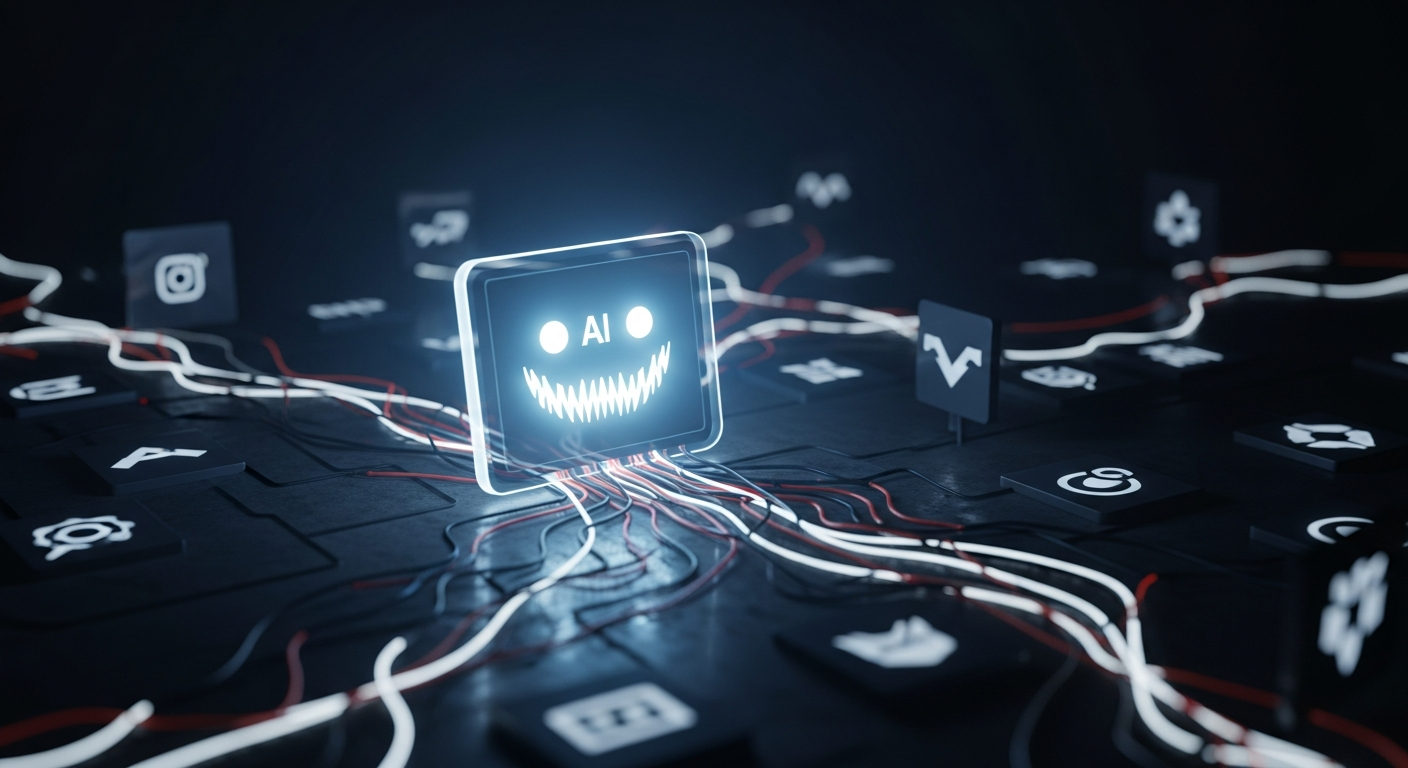

Yet, for seasoned observers of the tech industry, a familiar unease often accompanies such grand pronouncements of integration and simplification. History is replete with examples where initial promises of streamlined efficiency morph into new layers of complexity, data silos, and, ultimately, a tightening grip by the platform provider. Is Claude's new functionality a genuine leap towards a more intuitive digital existence, or is it a subtly crafted Trojan Horse, inviting users to consolidate their digital lives within Anthropic's ecosystem, thereby increasing their dependence and reducing their agency?

The Allure of Integration vs. The Reality of Interoperability

Anthropic's announcement touts the immediate, interactive nature of these new integrations, contrasting it with previous connections that merely returned text responses. While this move from static output to dynamic in-chat app interaction is certainly a technical advancement, it's crucial to distinguish between true interoperability and a deeper form of integration that blurs the lines of application ownership. Users aren't necessarily gaining more control over their data or processes; rather, they are ceding more operational oversight to the AI agent and, by extension, to Anthropic. The 'open-source protocol' angle, while reassuring on its face, doesn't negate the potential for a 'walled garden within an open garden' scenario, where the most valuable interactions are funneled through the AI provider's specific interpretation and implementation.

Data Privacy: A New Nexus of Vulnerability

Centralizing workflows, particularly those involving sensitive business communications and creative assets, immediately raises alarms about data privacy and security. By interacting with apps directly inside Claude, users are effectively deputizing the AI as a primary intermediary for a significant portion of their digital activities. This consolidates a vast amount of potentially proprietary and personal information under one roof, or rather, within one chat interface. What are the implications for data governance? How will access permissions be managed across multiple integrated services? And, critically, what are Anthropic's policies regarding the processing, storage, and potential utilization of this consolidated data? The narrative of convenience often overshadows the intricate legal and ethical considerations of such extensive data aggregation.

The Specter of Vendor Lock-In

Perhaps the most significant long-term concern is the insidious nature of vendor lock-in. As users become accustomed to, and reliant upon, the 'seamless' experience of conducting their work entirely within Claude, the effort required to migrate to an alternative AI or a different workflow strategy will inevitably increase. This creates a powerful gravitational pull towards Anthropic's platform, regardless of future innovations from competitors or shifts in user preferences. MCP, while open source, still requires Anthropic's specific implementation to deliver this integrated experience with Claude. This strategy mirrors historical moves by tech giants that initially offered convenient, integrated services only to later leverage that dependency for competitive advantage, often at the user's expense.

Productivity Mirage: More Tools, More Complexity?

The fundamental premise is that integrating these tools will enhance productivity. However, the introduction of another layer, an AI chatbot as the primary interface for other applications, could paradoxically introduce new inefficiencies and cognitive load. Users now need to learn the AI's specific commands and interaction nuances for each integrated application. What happens when the AI misunderstands a request, or when an app's functionality is subtly altered or limited when accessed through Claude? The perceived simplicity could quickly give way to frustration, requiring users to revert to direct app interaction anyway, thereby undermining the very purpose of the integration. This isn't just about reducing tab-switching; it's about whether the AI-mediated interaction truly improves the outcome, or merely adds another filter.

Public Sentiment: Cautious Optimism Dotted with Skepticism

Early reactions to Anthropic's announcement reveal a blend of curiosity and ingrained skepticism. On professional forums and social media, comments range from genuine interest in the time-saving potential to outright cynicism. "Another layer of abstraction I don't need," one user quipped on X. Another noted on a tech forum, "It's all about keeping you in their ecosystem. Convenient now, but what's the cost later?" There's a prevailing sentiment that while the idea of a 'super-agent' is appealing, the practical implications, especially concerning data control and potential future limitations, demand a much closer look than the marketing copy suggests. "They're not making our apps talk to us better," observed a software engineer, "they're making them talk to Claude better, and we're just along for the ride."

Conclusion: The Cost of Convenience

Anthropic's expansion of Claude's capabilities marks a significant technical achievement and a clear strategic move in the competitive AI landscape. The promise of streamlining workflows is compelling, and undoubtedly, some users will find immediate benefit. However, the industrial lens of 'Rusty Tablet' compels us to look beyond the immediate convenience and consider the broader ramifications. The 'seamless' integration of critical business tools into an AI chatbot, while superficially appealing, carries substantial risks related to data privacy, the potential for entrenched vendor lock-in, and the very real possibility of exchanging one form of digital friction for another. As the digital frontier continues to evolve, the true measure of innovation will lie not just in what it enables, but in the freedom and autonomy it preserves or, indeed, erodes. Users and organizations must weigh the undeniable allure of a unified AI assistant against the hidden costs of ceding more control to a single entity, lest they find themselves trading genuine productivity for a gilded cage.